Practically every TV and film production uses CG these days, but one with a fully digital character takes it to another level. Seth MacFarlane’s “Ted” is one of them, and the tech division of production company Fuzzy Door has built a suite of on-set augmented reality tools called ViewScreen, turning this potentially awkward process into an opportunity for collaboration and improvisation.

Working with a CG character or environment is difficult for both actors and crew. Imagine talking to empty space while someone delivers dialogue off-camera, or pretending a tennis ball on a stick is a shuttle coming into the landing bay. Until the entire production takes place on a holodeck, these CG elements will remain invisible, but ViewScreen at least allows everyone to work with them on camera, in real time.

“It dramatically improves the creative process and we’re able to get the shots we need much faster,” MacFarlane told TechCrunch.

The usual process for shooting with CG elements takes place almost entirely after the cameras are turned off. You shoot the scene with a stand-in for the character, whether it’s a tennis ball or a mocap-rigged performer, and give actors and camera operators cues and framing for how you expect the scene to play out. You then send your footage to the VFX people, who send back a rough cut, which then has to be tweaked to taste or redone. It’s an iterative, traditional process that leaves little room for spontaneity and often makes these shoots tedious and complicated.

“Basically, it came from my need as a VFX supervisor to show the invisible thing that everyone is supposed to interact with,” said Brandon Fayette, co-founder of Fuzzy Door Tech, a division of the production company. “It’s very difficult to film things that have digital elements, because they don’t exist. It’s difficult for the director, the camera operator has trouble framing, the gaffers, the lighting people can’t get the lighting to work properly on the digital element. Imagine being able to actually see the imaginary things on the set, on the day.”

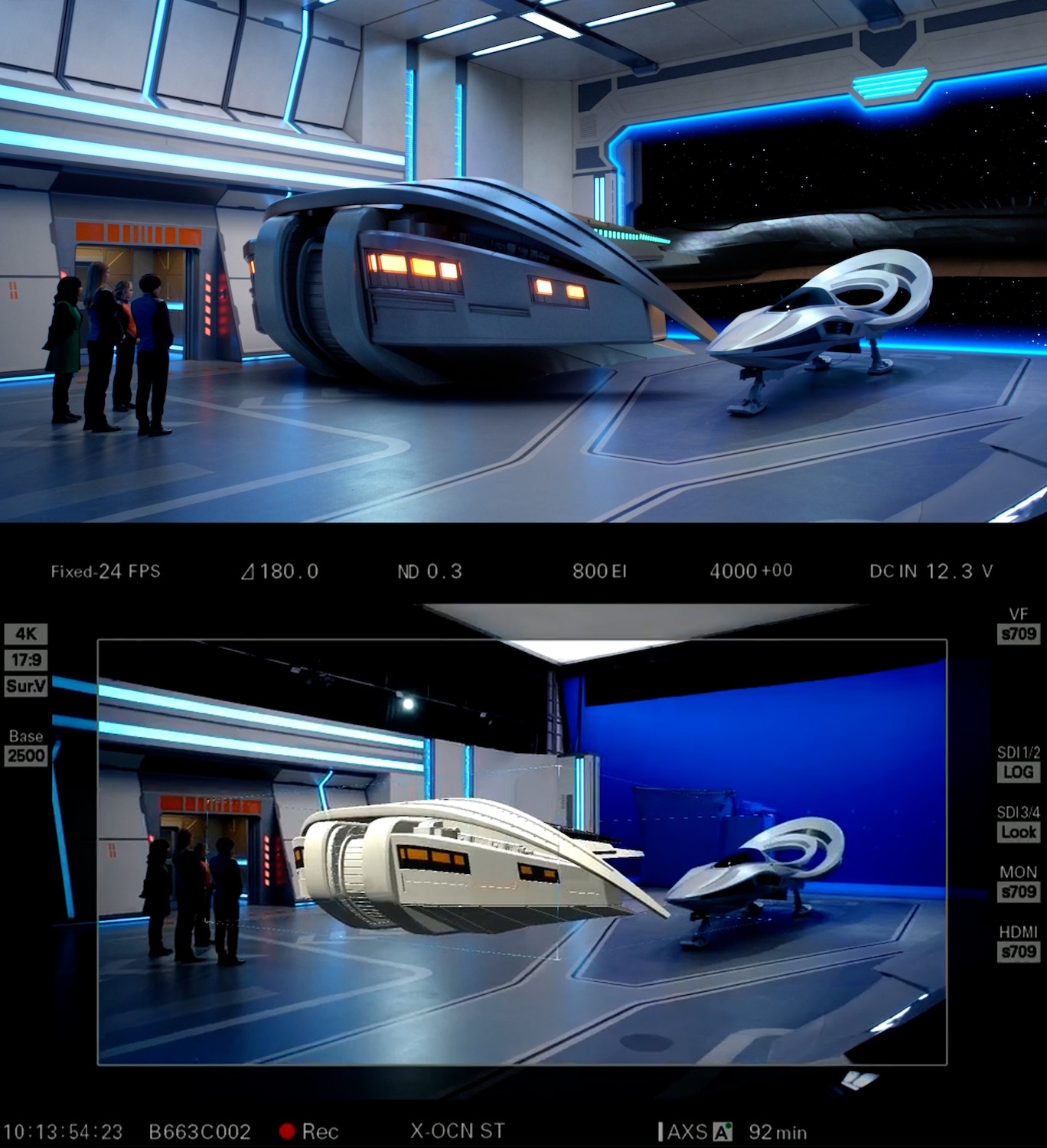

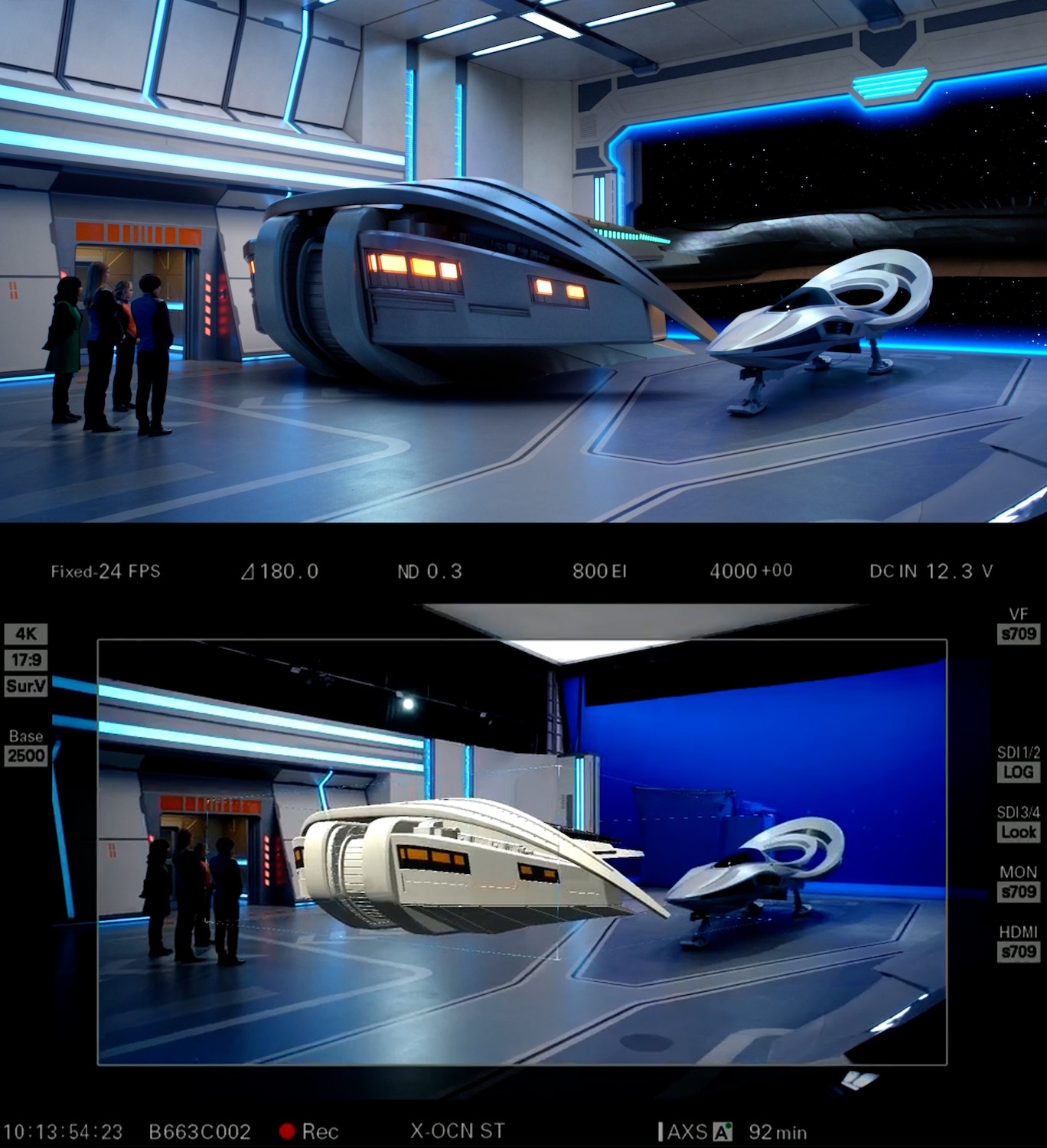

Image Credits: Fuzzy Door Tech

You might say, “I can do that with my iPhone right now. Have you ever heard of ARKit?” But even though the technology involved is similar (and in fact ViewScreen uses an iPhone), the difference is that one is a game and the other a tool. Sure, you can drop a virtual character onto a set in an AR app. But real cameras don’t see it. The on-set monitors don’t show it. The voice actor doesn’t sync with it. The VFX crew can’t base the final shots on it, and so on. It’s not about putting a digital character in a scene, it’s about doing it while meeting modern production standards.

ViewScreen Studio syncs wirelessly between multiple cameras (real ones, like Sony’s Venice series) and can integrate multiple data streams simultaneously through a central 3D compositing and positioning framework. They call it ProVis, or production visualization, a middle ground between pre- and post-production.

For a shot in “Ted,” for example, two cameras might have wide and close shots of the bear, which is controlled by someone on set with a gamepad or iPhone. His voice and gestures are done by MacFarlane live, while a behavioral AI keeps the character’s positions and gaze on target. Fayette showed me this live on a small scale, placing an animated version of Ted next to himself that included live face capture and free movement.

An example of ViewScreen Studio in action, with live footage on set below and the final shot above. Image Credits: Fuzzy Door Tech

Meanwhile, the cameras and computer record clean footage, clean VFX and a live composite, available both in the viewfinder and on monitors for all to see, all encoded and ready for the rest of the production process.

Elements can be given new directions or attributes live, such as waypoints or lighting. A virtual camera can move through the scene, letting alternate shots and scenarios emerge naturally. A path can be displayed only in the viewfinder of a moving camera so that the operator can plan their shot.

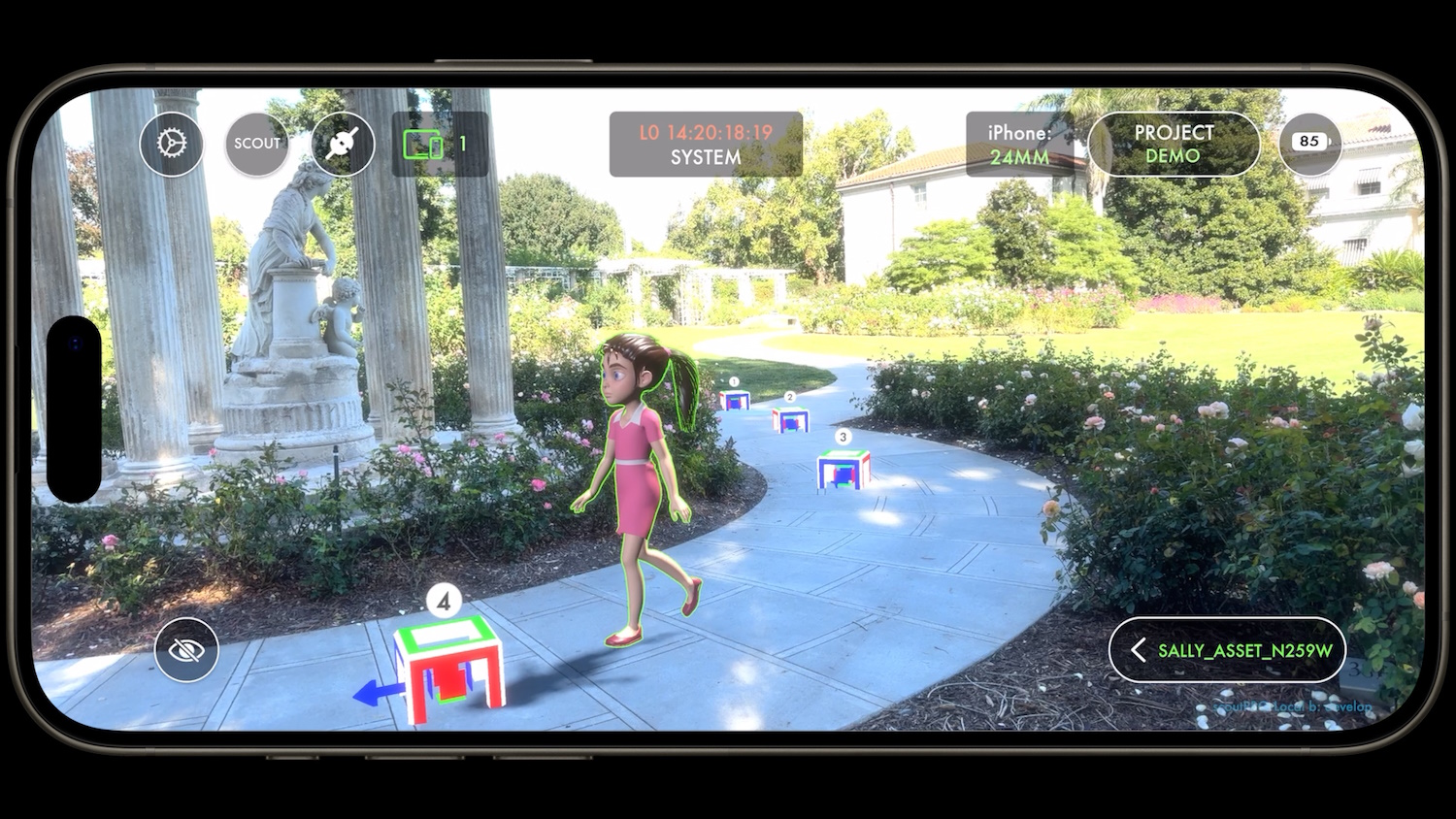

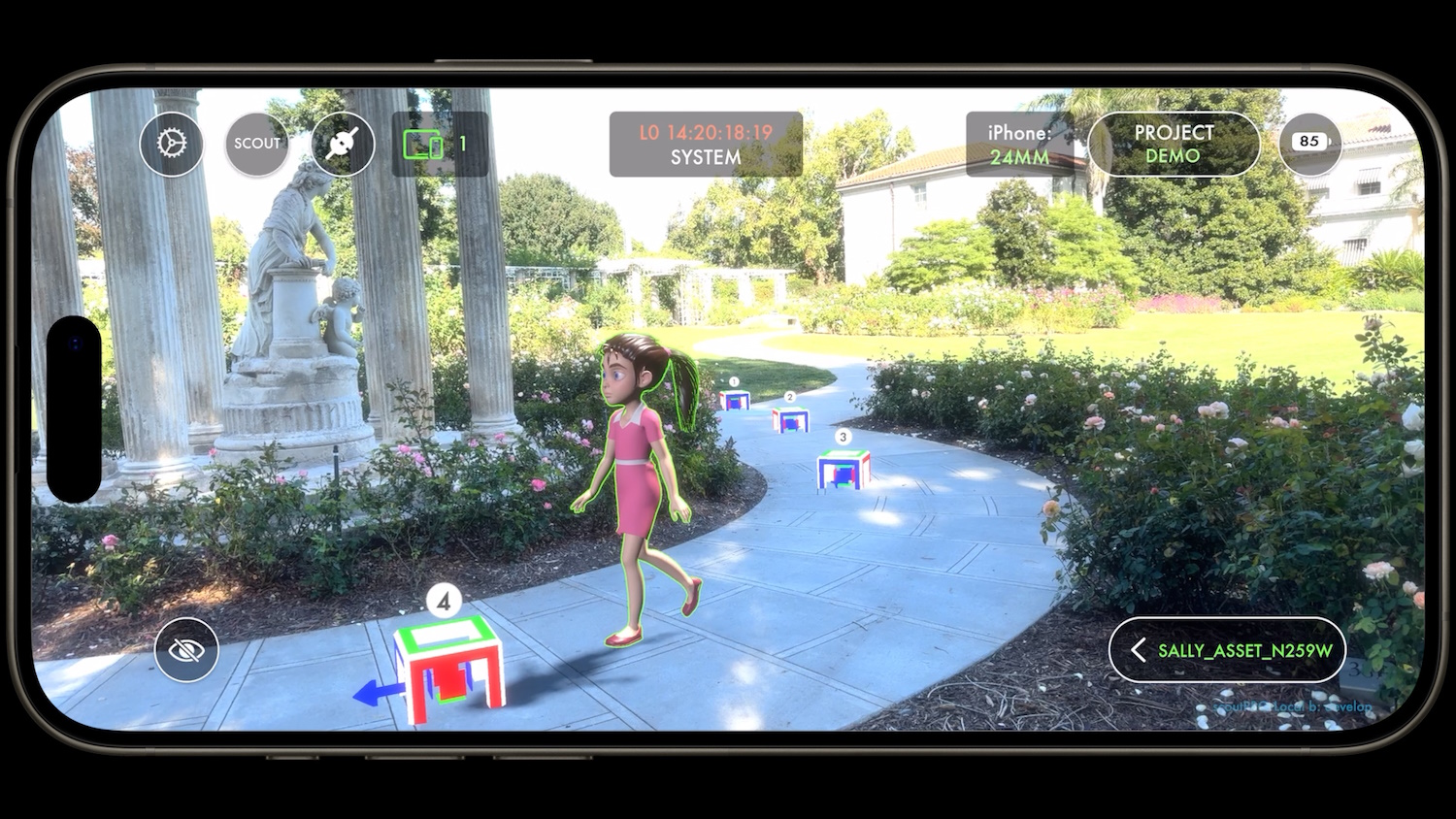

Examples of elements in a shot with a virtual character — the girl model will walk between the waypoints, which correspond to the real space. Image Credits: Fuzzy Door Tech

What if the director decides that the titular teddy bear Ted should get off the couch and walk? Or what if they want to try a more dynamic camera movement to highlight an alien landscape in ‘The Orville’? This is not something you could do in the pre-baked process commonly used for this material.

Of course, virtual productions in LED volumes address some of these issues, but you run into the same kinds of problems. You get creative freedom with dynamic backgrounds and lighting, but much of a scene actually has to be locked down tightly due to the limitations of how these giant sets work.

“Just to do a setup for ‘The Orville’ of a shuttle landing would take about seven takes and 15-20 minutes. Now we get them in two takes and it’s three minutes,” Fayette said. “We found ourselves not only having shorter days, but trying new things: we can play a little bit. It helps eliminate the technical stuff and lets the creative take over… the technical will always be there, but when you let the creatives create, the quality of the shots becomes much more advanced and fun. And it makes people feel more like the characters are real. We’re not staring into space.”

It’s not just theoretical: he said they shot “Ted” that way, “the entire production, for about 3,000 takes.” Traditional VFX artists eventually take over for the final-quality effects, but they aren’t being called on every few hours to render some new variation that might go straight to the trash.

If you’re in the business, you might want to learn about the four specific modules of the Studio product, directly from Fuzzy Door Tech:

- Tracker (iOS): Tracker streams an object’s position data from an iPhone mounted on a film camera and sends it to Compositor.

- Compositor (Windows/macOS): Compositor is a Windows/macOS application that combines the video stream from a film camera with position data from Tracker to composite VFX/CG elements into the video.

- Exporter (Windows/macOS): The exporter collects and compiles frames, metadata, and all other data from Compositor to deliver standard camera files at the end of the day.

- Motion (iOS): Stream an actor’s facial animations and body movements live, on set, to a digital character using an iPhone. Motion is completely markerless and suitless, with no fancy equipment required.
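
For the technically curious, the hand-off between a tracker and a compositor can be pictured roughly as follows. This is only an illustrative sketch and not Fuzzy Door’s code: the `TrackerSample` structure, the `pair_by_frame` helper and the choice of the standard “over” blend are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Pixel = Tuple[float, float, float]  # RGB, each channel in the range 0.0-1.0


@dataclass(frozen=True)
class TrackerSample:
    """Hypothetical pose packet: a frame number plus a camera position."""
    frame: int
    x: float
    y: float
    z: float


def pair_by_frame(frame_numbers: Sequence[int],
                  samples: Sequence[TrackerSample]) -> List[Tuple[int, TrackerSample]]:
    """Match each video frame to the tracker sample with the same frame number.

    Frames with no matching sample are skipped rather than guessed at.
    """
    by_frame = {s.frame: s for s in samples}
    return [(n, by_frame[n]) for n in frame_numbers if n in by_frame]


def over(cg: Pixel, alpha: float, plate: Pixel) -> Pixel:
    """Standard 'over' blend: lay a CG pixel on top of a camera pixel."""
    return tuple(alpha * c + (1.0 - alpha) * p for c, p in zip(cg, plate))


# A half-transparent red CG pixel over a blue camera pixel.
blended = over((1.0, 0.0, 0.0), 0.5, (0.0, 0.0, 1.0))

# Three video frames, but tracker data for only two of them.
pairs = pair_by_frame([1, 2, 3], [TrackerSample(1, 0.0, 1.5, 2.0),
                                  TrackerSample(2, 0.1, 1.5, 2.0)])
```

In a real pipeline the pose would drive a 3D render of the character before any blending happens; the sketch only shows why per-frame position data and the camera image need to arrive together.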

ViewScreen also has a mobile app, Scout, to do something similar on location. This is closer to your average AR app, but still includes the kind of metadata and tools you’d want if you were designing a location shot.

“When we were scouting for The Orville, we used ViewScreen Scout to visualize what a spaceship or character would look like on location. The VFX supervisor would send me stills and I would give feedback immediately. In the past, this could take weeks,” MacFarlane said.

Importing official assets and animating them while scouting cuts time and cost like crazy, Fayette said. “The director, photographer, [assistant directors], we can all see the same thing, we can insert and change things live. For The Orville we had to get that creature moving in the background and we could bring the animation straight into Scout and say, ‘Okay, that’s a little too fast, maybe we need a crane.’ It allows us to find answers to scouting problems very quickly.”

Fuzzy Door Tech is officially making its tools available today, but has already worked with a few studios and productions. “The way we sell them, it’s custom,” explained Faith Sedlin, the company’s president. “Each show has different needs, so we work with studios, read their scripts. Sometimes they care more about the set than the characters — but if it’s digital, we can do it.”