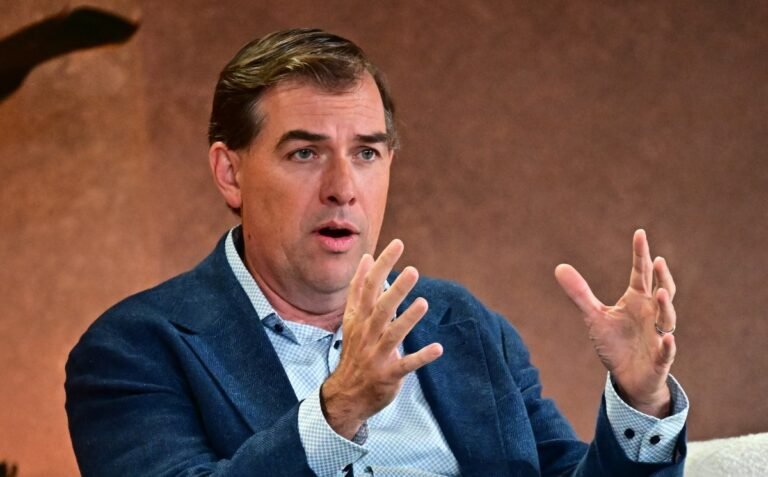

AWS CEO Matt Garman said that Amazon’s recent $50 billion investment in OpenAI, which follows its long-standing partnership with Anthropic and its $8 billion investment in that company, is the type of conflict of interest the cloud giant is used to handling.

Garman has worked at Amazon since he was a business school intern in 2005, before AWS launched in 2006, he told the audience at the HumanX conference, held this week in San Francisco.

When asked about the inherent conflict of working closely with two AI model makers that are fierce (and, arguably, sometimes petty) competitors, he said it is not a problem. Because AWS itself often competes with its partners, it has a lot of direct experience with such competition, he explained.

In AWS’s early years, it knew it couldn’t build every cloud offering, so the unit partnered with others.

“We also knew we would have to compete with our partners because the technology is interconnected,” Garman said. “So, for a long time, we’ve been building this dynamic for how we go to market with our partners,” he continued. “But we may also have first-party products that compete with them, and that’s fine, and we’ve promised them that we won’t give ourselves an unfair competitive advantage.”

Today, people are used to Amazon competing with those who sell on its cloud. Even one of AWS’s biggest rivals, Oracle, sells its database and other services on AWS. But it was a radical idea in 2006, when tech companies took pains never to compete with the partners who helped them succeed.

But Amazon is hardly a pioneer in shedding investor loyalty and conflict-of-interest conventions in the wild world of AI fundraising. When Anthropic announced its latest $30 billion round in February, it included at least a dozen investors who had also backed OpenAI. Among them was OpenAI’s main cloud partner, Microsoft.

For AWS, making a huge investment in OpenAI to secure its models for AWS customers (and to gain a technology development partner) was almost a matter of life and death. Both OpenAI’s and Anthropic’s models were already available on the cloud of Microsoft, AWS’s biggest rival.

Cloud giants are also working to stay front and center by offering AI model routing services. These services allow their customers to automatically use different models for different tasks as a way to maximize efficiency and reduce costs. As Garman explained, one model might be ideal for programming, another for reasoning, and a cheaper model for easier tasks like code completion. “I think that’s where people are going to go,” Garman said.

This is also how Amazon, and Microsoft for that matter, will use their own homegrown models – that old competition-with-your-partners situation, again.

All’s fair in love and AI these days.