It’s Google Cloud Next in Las Vegas this week, and that means it’s time for a bunch of new types of instances and accelerators to appear on the Google Cloud platform. Aside from the new custom Arm-based Axion chips, most of this year’s announcements are about AI accelerators, whether they’re made by Google or Nvidia.

Just a few weeks ago, Nvidia announced the Blackwell platform. But don’t expect Google to offer these machines anytime soon. Support for the high-performance Nvidia HGX B200 for AI and HPC workloads and the GB200 NVL72 for large language model (LLM) training will arrive in early 2025. An interesting bit from Google’s announcement: The GB200 servers will be liquid-cooled.

If this sounds like a bit of an early announcement, that’s because it is: Nvidia said its Blackwell chips won’t be publicly available until the last quarter of this year.

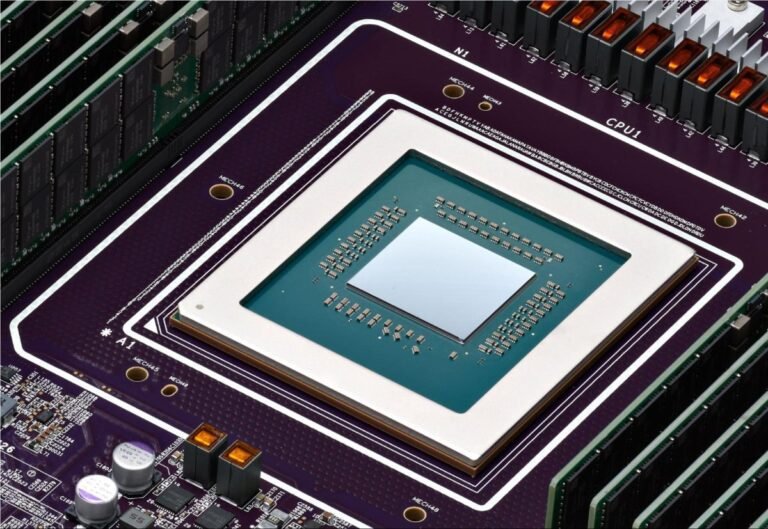

Image Credits: Frederic Lardinois/TechCrunch

Before Blackwell

For developers who need more power to train LLMs today, Google also announced the A3 Mega instance. This instance, which the company developed together with Nvidia, features the industry-standard H100 GPUs but combines them with a new networking system that can deliver up to twice the bandwidth per GPU.

Another new A3 instance is A3 confidential, which Google described as allowing customers to “better protect the confidentiality and integrity of sensitive data and AI workloads during training and inference.” The company has long offered confidential computing services that encrypt data in use. Here, once enabled, confidential computing will encrypt data transfers between the Intel CPU and the Nvidia H100 GPU via protected PCIe. No code changes are required, Google says.

As for Google’s own chips, the company on Tuesday made its Cloud TPU v5p processors — the most powerful of its homegrown AI accelerators yet — generally available. These chips feature a 2x improvement in floating point operations per second and a 3x improvement in memory bandwidth speed.

All these fast chips need an underlying architecture that can keep up with them. So, in addition to the new chips, Google also announced on Tuesday new storage options optimized for artificial intelligence. Hyperdisk ML, now in preview, is the company’s next-generation block storage service that can improve model load times by up to 3.7x, according to Google.

Google Cloud is also launching a number of more traditional instances, powered by Intel’s fourth- and fifth-generation Xeon processors. The new mainstream C4 and N4 instances, for example, will feature fifth-generation Emerald Rapids Xeons, with the C4 focusing on performance and the N4 on price. The new C4 instances are now in private preview and the N4 machines are generally available today.

Also new, but still in preview, are C3 bare-metal machines, powered by older fourth-generation Intel Xeons; X4 memory-optimized bare-metal instances; and Z3, Google Cloud’s first storage-optimized virtual machine, which promises to deliver “the highest IOPS for storage optimized instances among leading clouds.”