SAN JOSE — “I hope you realize this is not a concert,” Nvidia president Jensen Huang told an audience so large it filled the SAP Center in San Jose. That is how he introduced what is perhaps the complete opposite of a concert: the company’s GTC event. “You’ve arrived at a developer conference. There will be a lot of science describing algorithms, computer architecture, mathematics. I sense a very heavy weight in the room; all of a sudden, you’re in the wrong place.”

It may not have been a rock concert, but the leather-jacket-wearing 61-year-old CEO of the world’s third-most-valuable company by market capitalization certainly had quite a number of fans in the audience. The company was founded in 1993 with a mission to push general computing beyond its limits. “Accelerated computing” became the rallying cry for Nvidia: Wouldn’t it be great to make chips and boards that were specialized, rather than general purpose? Nvidia chips gave graphics-hungry gamers the tools they needed to play games at higher resolutions, with higher quality and at higher frame rates.

It’s perhaps not a huge surprise that Nvidia’s CEO drew parallels with a concert. The venue was, in a word, concert-like. Image credits: TechCrunch / Haje Kamps

Monday’s keynote was, in some ways, a return to the company’s original mission. “I want to show you the soul of Nvidia, the soul of our company, at the intersection of computer graphics, physics and artificial intelligence, all intersecting inside a computer.”

Then, for the next two hours, Huang did a rare thing: He nerded out. Hard. Anyone who came to the keynote expecting him to pull a Tim Cook, with a slick, audience-focused keynote, was bound to be disappointed. All in all, the keynote was tech-heavy, acronym-laden, and unapologetically a developer conference.

We need bigger GPUs

Graphics processing units (GPUs) are where Nvidia got its start. If you’ve ever built a computer, you’re probably picturing a graphics card that goes into a PCI slot. That’s where the journey started, but we’ve come a long way since then.

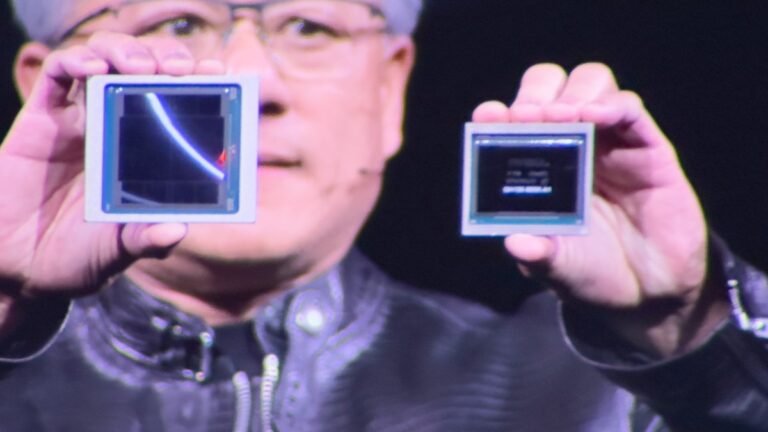

The company announced the all-new Blackwell platform, which is an absolute monster. Huang says the processor core was “pushing the limits of physics on how big a chip could be.” It combines the power of two chips, joined by a link offering speeds of 10 TB/s.

“I’m holding about $10 billion worth of equipment here,” Huang said, holding up a Blackwell prototype. “The next one will cost $5 billion. Luckily for you all, it gets cheaper from there.” Putting a bunch of these chips together can add up to some truly impressive power.

The previous generation of AI-optimized GPUs was called Hopper. Blackwell is between 2 and 30 times faster, depending on how you measure it. Huang explained that it took 8,000 GPUs, 15 megawatts and 90 days to train the GPT-MoE-1.8T model. With the new system, you could use just 2,000 GPUs and 25% of the power.
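For a rough sense of scale, Huang’s figures can be run through a back-of-the-envelope calculation, sketched here in Python. It assumes, beyond what was said on stage, that the Blackwell run also takes 90 days and that the quoted power is a constant draw for the whole run; the variable and function names are illustrative only.

```python
# Figures quoted in the keynote for training the GPT-MoE-1.8T model.
HOPPER_GPUS = 8_000
HOPPER_POWER_MW = 15.0
TRAINING_DAYS = 90

# Blackwell: 2,000 GPUs at 25% of the power.
# ASSUMPTION: the run still takes 90 days and power is a constant draw.
BLACKWELL_GPUS = 2_000
BLACKWELL_POWER_MW = HOPPER_POWER_MW * 0.25


def energy_mwh(power_mw: float, days: float) -> float:
    """Energy in megawatt-hours for a constant power draw lasting `days` days."""
    return power_mw * days * 24


def watts_per_gpu(power_mw: float, gpus: int) -> float:
    """Quoted power split evenly across GPUs (the figure may include networking, cooling, etc.)."""
    return power_mw * 1_000_000 / gpus


hopper_energy = energy_mwh(HOPPER_POWER_MW, TRAINING_DAYS)
blackwell_energy = energy_mwh(BLACKWELL_POWER_MW, TRAINING_DAYS)

print(f"GPUs:    {HOPPER_GPUS:,} -> {BLACKWELL_GPUS:,}")
print(f"Power:   {HOPPER_POWER_MW} MW -> {BLACKWELL_POWER_MW} MW")
print(f"Energy:  {hopper_energy:,.0f} MWh -> {blackwell_energy:,.0f} MWh")
print(f"Per GPU: {watts_per_gpu(HOPPER_POWER_MW, HOPPER_GPUS):,.0f} W -> "
      f"{watts_per_gpu(BLACKWELL_POWER_MW, BLACKWELL_GPUS):,.0f} W")
```

Under those assumptions, the energy for the run drops from about 32,400 MWh to 8,100 MWh. Dividing the quoted power by the GPU count gives the same 1,875 W in both cases, so in this particular comparison the saving comes from needing a quarter as many GPUs rather than from each one drawing less.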

These GPUs are pushing a fantastic amount of data around, which is a very nice segue into another topic Huang talked about.

What’s next

Nvidia introduced a new set of tools for automakers working on self-driving cars. The company was already a major player in robotics, but it doubled down with new tools for roboticists to make their robots smarter.

The company also introduced Nvidia NIM, a software platform aimed at simplifying the deployment of artificial intelligence models. NIM uses Nvidia hardware as a foundation and aims to accelerate companies’ AI initiatives by providing an ecosystem of AI-ready containers. It supports models from a variety of sources, including Nvidia, Google and Hugging Face, and integrates with platforms such as Amazon SageMaker and Microsoft Azure AI. NIM will expand its capabilities over time, including tools for generative AI chatbots.

“Anything you can digitize: As long as there’s some structure where we can apply some patterns, it means we can learn the patterns,” Huang said. “And if we can learn the patterns, we can understand the meaning. When we understand the meaning, we can generate it as well. And here we are, in the generative AI revolution.”